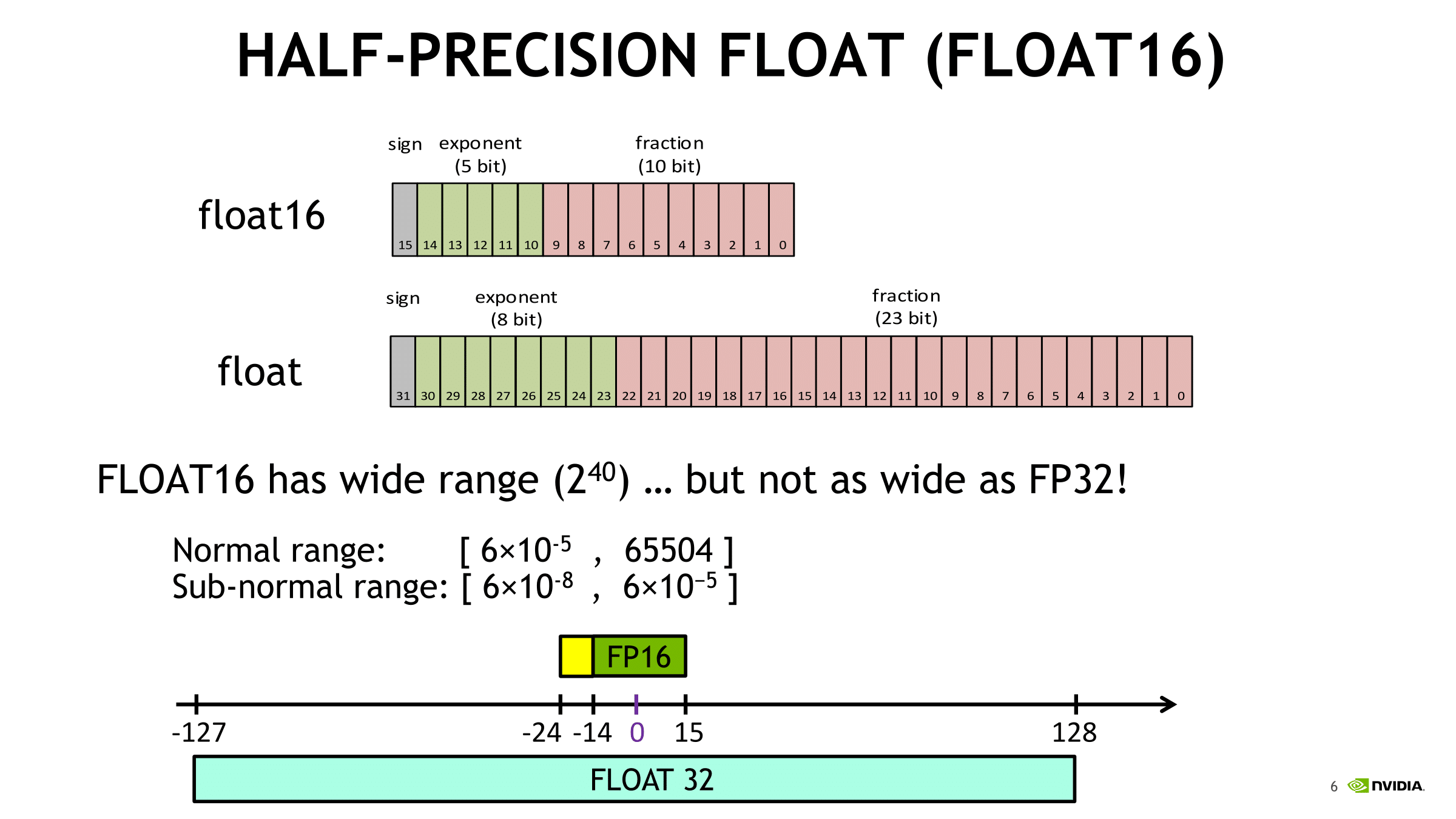

Fast Solution of Linear Systems via GPU Tensor Cores' FP16 Arithmetic and Iterative Refinement | Numerical Linear Algebra Group

FPGA's Speedup and EDP Reduction Ratios with Respect to GPU FP16 when...

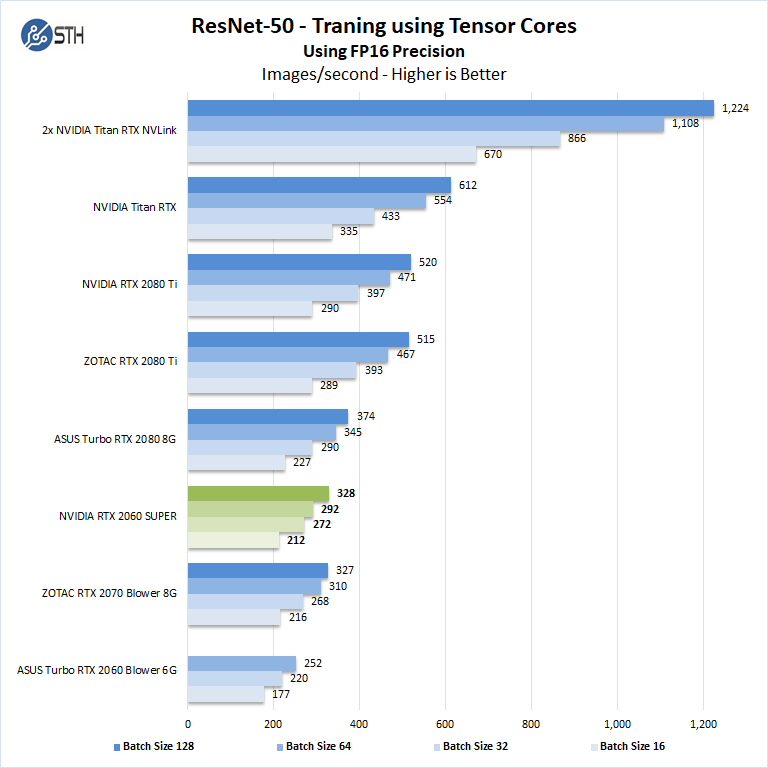

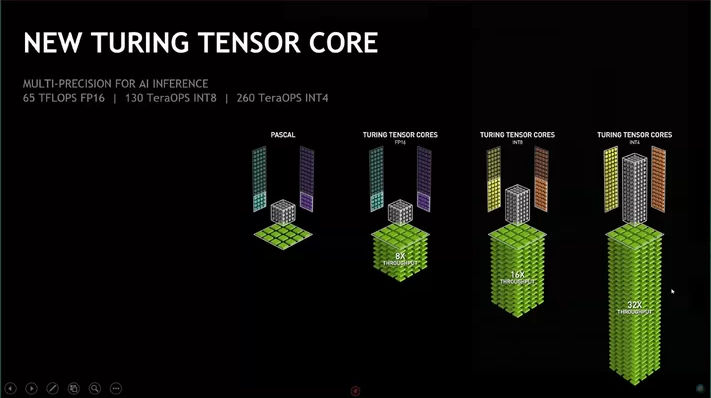

Revisiting Volta: How to Accelerate Deep Learning - The NVIDIA Titan V Deep Learning Deep Dive: It's All About The Tensor Cores